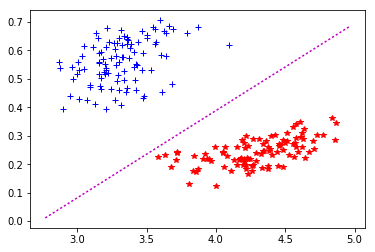

SVMs are used for discriminative classification and were originally proposed by Vapnik [58]. The goal of a supervised learning algorithm is to learn the function $h(x)$ from a training set

$$\{(x_1, l_1), (x_2, l_2), \ldots, (x_p, l_p)\} \subset \mathbb{R}^N \times \{-1, 1\},$$

where the $l_k$ are the labels, $p$ is the size of the training set and $N$ is the dimension of the input vectors. The decision boundary is a hyperplane, called the separating hyperplane or separator.

Figure 16.14: SVM classifier, hyperplane optimization (labels: margin, margin, separating hyperplane).

The 3D novelty-detection example creates a OneClassSVM instance with `svm.OneClassSVM(nu=0.1, kernel="rbf", gamma=0.1)` and fits it to the data. It then predicts the class of the various inputs created before and computes the classification error frequencies. Next, it calculates the distance from the separating hyperplane of the SVM over the whole space, using the grid defined at the beginning, and creates a figure with axes for 3D plotting. The input points are drawn with 3D scatter plots: training points in white, test points in green and outliers in red. The separating hyperplane is plotted by recreating the isosurface for the distance = 0 level in the distance grid computed through the decision function of the SVM. This is done with the marching cubes implementation from scikit-image. The result is then scaled and transformed to the actual size of the volume of interest, and the surface is drawn with `facecolor='orange', edgecolor='gray', alpha=0.3`.

The 2D example plots the maximum-margin separating hyperplane within a two-class separable dataset using a Support Vector Machine classifier with a linear kernel. It imports `matplotlib.pyplot as plt`, `svm` from `sklearn`, `make_blobs` from `sklearn.datasets` and `DecisionBoundaryDisplay` from `sklearn.inspection`, then creates 40 separable points with `make_blobs`.
Separating hyperplanes: in the scatter plot above, can we find a line that separates the two categories? Such a line is called a separating hyperplane. Why is it called a hyperplane? In one dimension the separator is a point, in two dimensions it is a line, in three dimensions it is a plane, and in more than three dimensions it is called a hyperplane.

The … of the SVM is based on a hyperplane that maximizes the margin between the samples and the separating hyperplane, represented by $h(x) = w^T x + w_0$, where $x = (x_1, \ldots)$.

Contents: 1 History; 2 Intuitive summary; 3 General principle; 3.1 Linear discrimination and separating hyperplane; 3.2 Example; 3.3 Maximum margin; 3.4 Finding the optimal hyperplane; 3.4.1 Primal formulation; 3.4.2 Dual formulation; 3.4.3 Consequences; 3.5 Non-separable case: the … trick

You could try imagining them as streams of energy or particles at various points in space. The energy moves at high speed. Ships with special shields can ride the streams to various destinations, carried by the force of the energy or particles. The shield keeps the particles from tearing the ship apart by spreading the force of the impact over a larger area.

For the 3D example, a regular grid that samples the 3D space for later operations is generated from `linspace(X_MIN, X_MAX, SPACE_SAMPLING_POINTS)`, `linspace(Y_MIN, Y_MAX, SPACE_SAMPLING_POINTS)` and `linspace(Z_MIN, Z_MAX, SPACE_SAMPLING_POINTS)`, with `Z_MAX = 5`. Training data are generated by taking a random cluster and copying it to various places in the space. Regular novel observations are generated with the same method and distribution properties, and abnormal novel observations come from a different distribution.
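The 3D novelty-detection pipeline (data generation, `OneClassSVM(nu=0.1, kernel="rbf", gamma=0.1)`, error frequencies, and the distance grid) can be sketched as follows. Many details are assumptions: the bounds `X_MIN`…`Y_MAX` (only `Z_MAX = 5` appears in the text), `SPACE_SAMPLING_POINTS = 20`, the two cluster positions, the sample sizes and the uniform distribution for the abnormal observations. The sketch stops at the distance grid `Z`. The marching-cubes step described in the text would pass that grid to scikit-image (for instance `skimage.measure.marching_cubes(Z, level=0)`), and it is omitted here.

```python
import numpy as np
from sklearn import svm

# Hypothetical values for the constants named in the text.
TRAIN_POINTS = 100
X_MIN, X_MAX = -5, 5
Y_MIN, Y_MAX = -5, 5
Z_MIN, Z_MAX = -5, 5
SPACE_SAMPLING_POINTS = 20

rng = np.random.default_rng(42)

# Training data: one random cluster, copied to two places in the space.
cluster = 0.3 * rng.standard_normal((TRAIN_POINTS // 2, 3))
X_train = np.vstack([cluster + 2, cluster - 2])

# Regular novel observations: same method and distribution properties.
new_cluster = 0.3 * rng.standard_normal((10, 3))
X_test = np.vstack([new_cluster + 2, new_cluster - 2])

# Abnormal novel observations: a different (uniform) distribution.
X_outliers = rng.uniform(low=X_MIN, high=X_MAX, size=(20, 3))

clf = svm.OneClassSVM(nu=0.1, kernel="rbf", gamma=0.1)
clf.fit(X_train)

# predict() returns +1 for inliers and -1 for outliers.
y_pred_train = clf.predict(X_train)
y_pred_test = clf.predict(X_test)
y_pred_outliers = clf.predict(X_outliers)

# Classification error frequencies.
err_train = np.mean(y_pred_train == -1)       # training points rejected
err_test = np.mean(y_pred_test == -1)         # regular novel points rejected
err_outliers = np.mean(y_pred_outliers == 1)  # abnormal points accepted

# Regular grid over the 3D space and the signed distance (decision function) on it.
xx, yy, zz = np.meshgrid(
    np.linspace(X_MIN, X_MAX, SPACE_SAMPLING_POINTS),
    np.linspace(Y_MIN, Y_MAX, SPACE_SAMPLING_POINTS),
    np.linspace(Z_MIN, Z_MAX, SPACE_SAMPLING_POINTS),
    indexing="ij",
)
grid = np.column_stack([xx.ravel(), yy.ravel(), zz.ravel()])
Z = clf.decision_function(grid).reshape(xx.shape)  # 0-level = separating surface

print(f"train errors: {err_train:.2f}, test errors: {err_test:.2f}, "
      f"outliers accepted: {err_outliers:.2f}")
```

The parameter `nu` bounds the fraction of training points treated as outliers, so `err_train` should stay close to or below 0.1.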

A set $K \subseteq \mathbb{R}^n$ is a cone if $x \in K \Rightarrow \lambda x \in K$ for any scalar $\lambda \geq 0$.

Definition 2 (Conic hull).

The 3D example uses `TRAIN_POINTS = 100` training points, then defines the size of the space that is of interest for the example. The isosurface is drawn with `Poly3DCollection`, imported via `from mpl_toolkits.mplot3d.art3d import Poly3DCollection`.

The geometric interpretation of the Farkas lemma illustrates the connection to the separating hyperplane theorem and makes the proof straightforward. Let $g = g' - s \cdot (1, 1, \ldots, 1)$; then $g$ has the desired property.
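The following numerical sketch of that connection assumes SciPy is available. For a matrix $A$ and a vector $b$, Farkas' lemma says that exactly one of two things holds. Either (i) $Ax = b$ for some $x \ge 0$, so $b$ lies in the cone generated by the columns of $A$, or (ii) there is a $y$ with $A^T y \ge 0$ and $b^T y < 0$, so the hyperplane $y^T z = 0$ separates that cone from $b$. The hypothetical helper `farkas` uses non-negative least squares (`scipy.optimize.nnls`). If the residual is zero, $b$ is in the cone. Otherwise, the NNLS optimality conditions make the residual vector itself a certificate.

```python
import numpy as np
from scipy.optimize import nnls


def farkas(A, b, tol=1e-9):
    """Hypothetical helper: decide whether b lies in the cone spanned by A's columns.

    Returns ("in_cone", x) with x >= 0 and A @ x ~= b, or
    ("separated", y) with A.T @ y >= 0 and b @ y < 0, so the hyperplane
    {z : y @ z = 0} separates the cone from b.
    """
    x, rnorm = nnls(A, b)  # min ||A x - b|| subject to x >= 0
    if rnorm <= tol:
        return "in_cone", x
    # NNLS optimality (KKT): A.T @ (A @ x - b) >= 0 and x @ A.T @ (A @ x - b) = 0,
    # hence y = A @ x - b satisfies b @ y = x @ A.T @ y - y @ y = -||y||^2 < 0.
    y = A @ x - b
    return "separated", y


# Columns e1 and e2 generate the nonnegative quadrant of R^2.
A = np.array([[1.0, 0.0],
              [0.0, 1.0]])

print(farkas(A, np.array([2.0, 3.0])))   # inside the cone: returns weights x
print(farkas(A, np.array([-1.0, 2.0])))  # outside the cone: returns certificate y
```

For the second point the certificate is $y = (1, 0)$. Every generator satisfies $y^T a \ge 0$, while $y^T b = -1 < 0$.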

For example, let $C = \ldots$ such that $\forall y \in C$ we have $g' \cdot y > s \geq g' \cdot f$. Your statement of the problem admits the possibility that $f$ can be chosen to be a positive multiple of some element of $C$.

In this example, the optimal separating hyperplane was found with a margin of 2.23 and 2 support vectors; this hyperplane could be found from these 2 points alone.
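The claim that two support vectors suffice can be checked directly. With one point per class, the maximum-margin hyperplane is the perpendicular bisector of the segment joining them. Minimizing $\lVert w\rVert$ subject to $w^T a + c = 1$ and $w^T b + c = -1$ gives $w = 2(a-b)/\lVert a-b\rVert^2$, and the margin width $2/\lVert w\rVert$ equals $\lVert a-b\rVert$. The points `a` and `b` below are hypothetical, since the example's points are not given. "Margin" here means the full width $2/\lVert w\rVert$; some tools report the half-width $1/\lVert w\rVert$ instead. The closed form is cross-checked against scikit-learn's `SVC` with a linear kernel and a large `C`.

```python
import numpy as np
from sklearn import svm


def two_point_hyperplane(a, b):
    """Hypothetical helper: max-margin hyperplane w @ x + c = 0 for positive a, negative b."""
    d = a - b
    w = 2 * d / (d @ d)             # shortest w with w @ (a - b) = 2
    c = -w @ (a + b) / 2            # hyperplane passes through the midpoint
    margin = 2 / np.linalg.norm(w)  # equals ||a - b||
    return w, c, margin


a = np.array([1.0, 2.0])  # hypothetical positive support vector
b = np.array([3.0, 0.0])  # hypothetical negative support vector
w, c, margin = two_point_hyperplane(a, b)

# Cross-check with a (nearly) hard-margin linear SVM trained on the same two points.
clf = svm.SVC(kernel="linear", C=1e6).fit(np.vstack([a, b]), [1, -1])
print("closed form:", w, c, margin)
print("SVC:        ", clf.coef_[0], clf.intercept_[0])
```

For these points the closed form gives $w = (-0.5, 0.5)$, $c = 0.5$ and a margin of $\sqrt{8} \approx 2.83$.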
